01 — The Illusion

The Illusion of Shared Understanding

In one conversation, "AI agents" meant a smarter way to automate customer support. In another, it meant systems that could eventually replace entire workflows. And in yet another, it meant tools that assist teams — copilots, not replacements.

Same terminology. Completely different expectations.

When language aligns but meaning doesn't, the result is a quiet form of misalignment: hard to detect, and even harder to correct.

02 — Three Realities

Three Different Realities Behind the Same Narrative

What I've been observing is not a single AI strategy, but several strategies coexisting under the same umbrella.

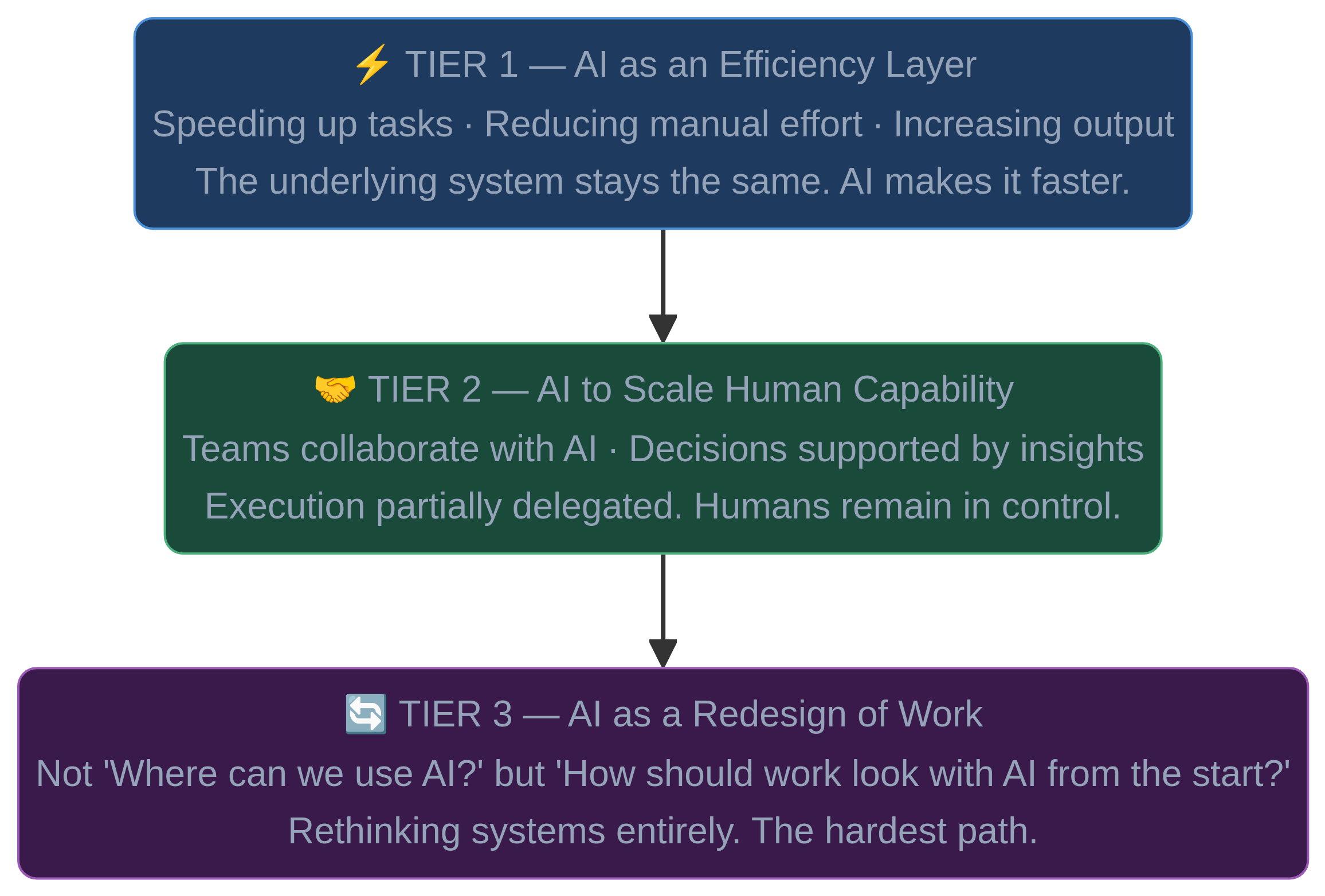

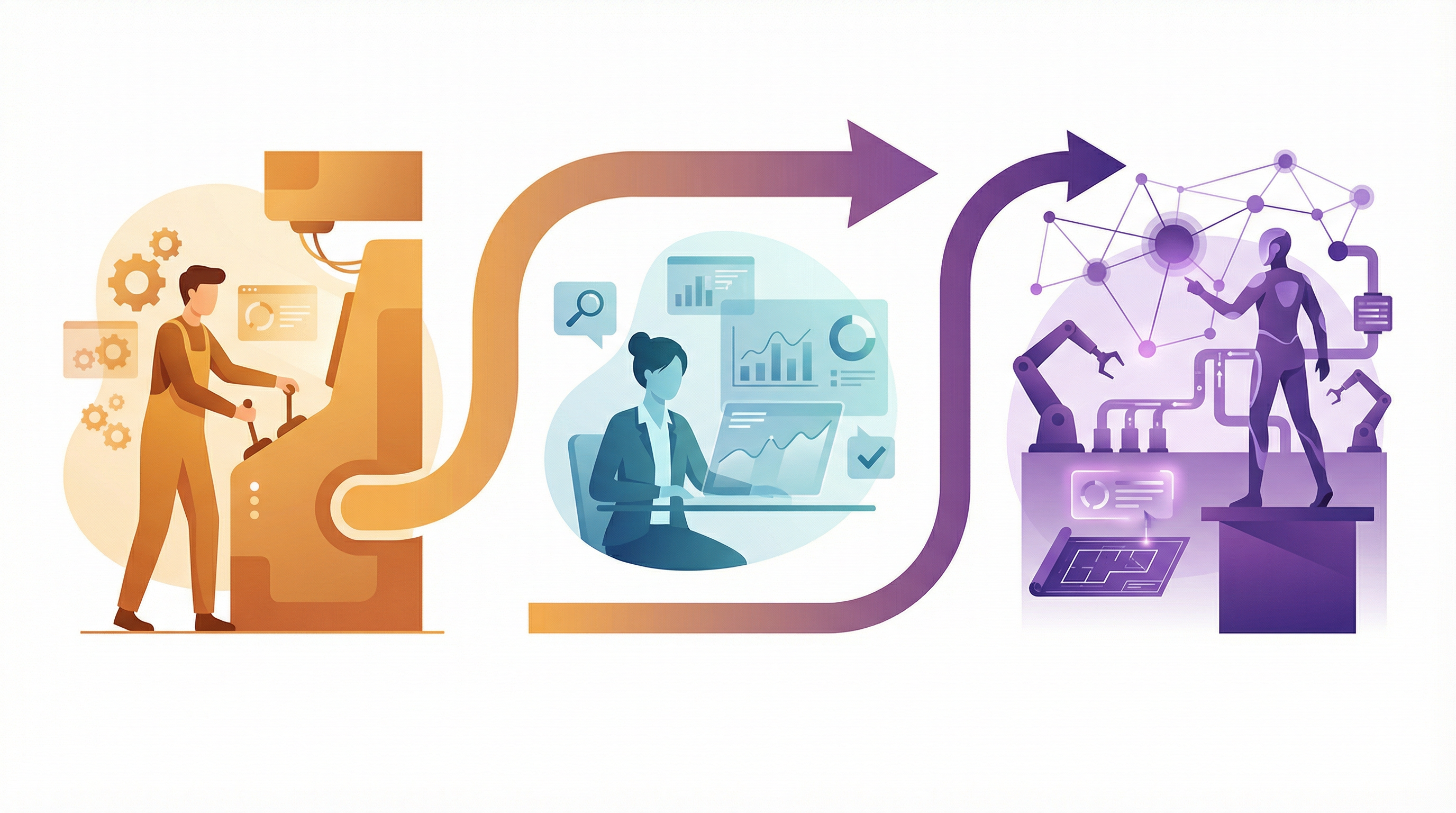

Tier 1

AI as an Efficiency Layer

- Speeding up tasks
- Reducing manual effort
- Increasing output
- The underlying system doesn't change; AI makes it faster.

Tier 2

AI to Scale Human Capability

- Teams collaborate with AI tools
- Decisions supported by generated insights
- Execution partially delegated
- The shift is visible, but anchored in human control.

Tier 3

AI as a Redesign of Work

- Not "Where can we use AI?" but "What should work look like if it were designed with AI from the start?"
- Less about tools, more about rethinking systems
- The hardest path, and the most transformative.

03 — Big Tech Divide

Same Words, Different Worlds

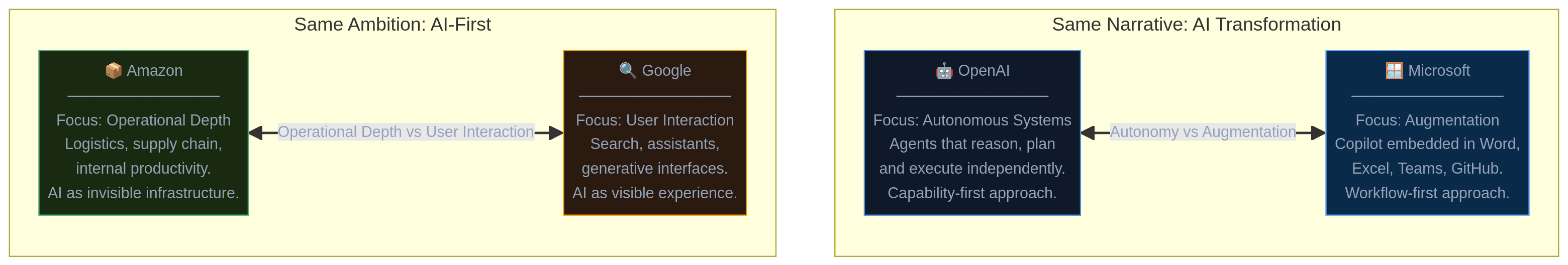

This misalignment is not just theoretical — it's visible in how large technology companies approach AI, even when they use similar language.

Same Narrative: AI Transformation

Autonomy-first

Agents that reason, plan, and execute independently. Capability-first approach — what AI can do given the right context.

Augmentation-first

Copilot embedded in Word, Excel, Teams, GitHub. Workflow-first — AI where people already work.

Same narrative: AI transformation. Different reality: autonomy vs augmentation.

Same Ambition: AI-First

Operational depth

Logistics, supply chain, internal productivity. AI as invisible infrastructure — critical to operations, hidden from users.

User interaction

Search, assistants, generative interfaces. AI as visible experience — directly tied to how users engage.

Same ambition: AI-first. Different expression: operational depth vs user interaction.

What these examples show is not that one approach is right or wrong. It's that even at the highest level, companies are making fundamentally different choices about where AI creates value, how much autonomy to allow, and how humans remain involved. And yet, from the outside, it often sounds like they are all doing the same thing.

04 — The Human Role

HITL, HOTL… or Something Deeper?

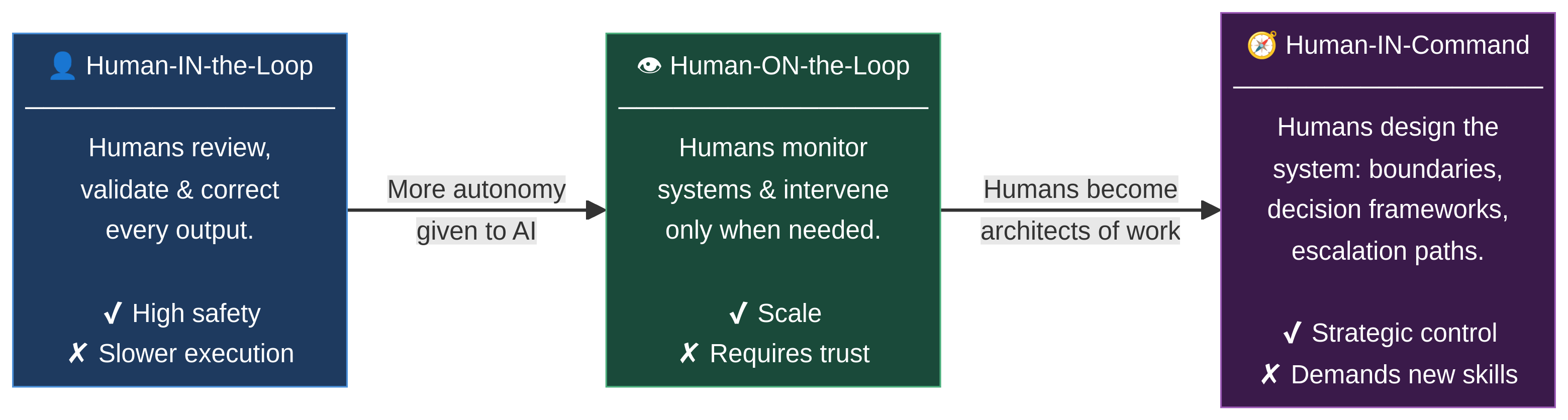

Despite all these differences, there is one thing that seems consistent: no one is truly removing humans from the equation. If anything, the opposite is happening. Companies are becoming more intentional about how humans stay involved.

We often hear terms like Human-in-the-Loop (HITL) and Human-on-the-Loop (HOTL). They sound technical, but they represent something fundamental: where responsibility sits.

Human-in-the-Loop

Humans are directly involved — reviewing, validating, and correcting. This creates safety, but also slows things down.

Human-on-the-Loop

Humans step back from execution, but remain present — monitoring systems and intervening when needed. This creates scale, but requires trust in the system.

Human-in-Command

Humans are no longer part of the flow of work — they design it. They define boundaries, decision frameworks, and escalation paths. Not doing the work. Shaping how work happens.

05 — The Shift

The Shift That Sits Underneath Everything

What's changing is not just what we do, but how we relate to work itself.

And this shift is subtle. It doesn't happen in big announcements. It happens in small changes: who makes decisions, when humans intervene, and what gets delegated.

06 — The Risk

The Risk No One Is Explicitly Addressing

The biggest challenge is not the technology. It's that we assume alignment where there isn't any.

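When we say "We're investing in AI," are we:

- Using AI as an efficiency layer, making today's work faster?
- Using AI to scale human capability, with people still in control?
- Redesigning how work happens, with AI built in from the start?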

Each requires a different mindset, a different structure, and a different level of change.

Maybe the real question isn't "Are we adopting AI fast enough?" — but rather: "Do we actually agree on what we're building?"

AI is often framed as a technological shift. But what I keep seeing is something else: a shift in responsibility, in decision-making, and in the role of humans inside systems. Perhaps that's where the real work is. Not in adopting AI, but in being intentional about how we design the relationship between humans and AI.

07 — Self-Assessment

Which Reality Is Your Organisation Moving Toward?

Answer five questions to discover which AI strategy tier best describes where your organisation actually is — and what the next honest question should be.